Introduction to JTELSS26

Description

If you are curious about the EATEL summer school on Technology-Enhanced Learning and would like to learn more about this event, this short session will answer many of your questions and help you decide whether to participate! We will briefly present the academic program, the logistics of the event, the application and registration process, and the participation fee, and we will share some of our experience. There will be time to ask any questions you might have about the event.

Facilitator

Mikhail Fominykh is the general chair of the EATEL summer schools on Technology-Enhanced Learning.

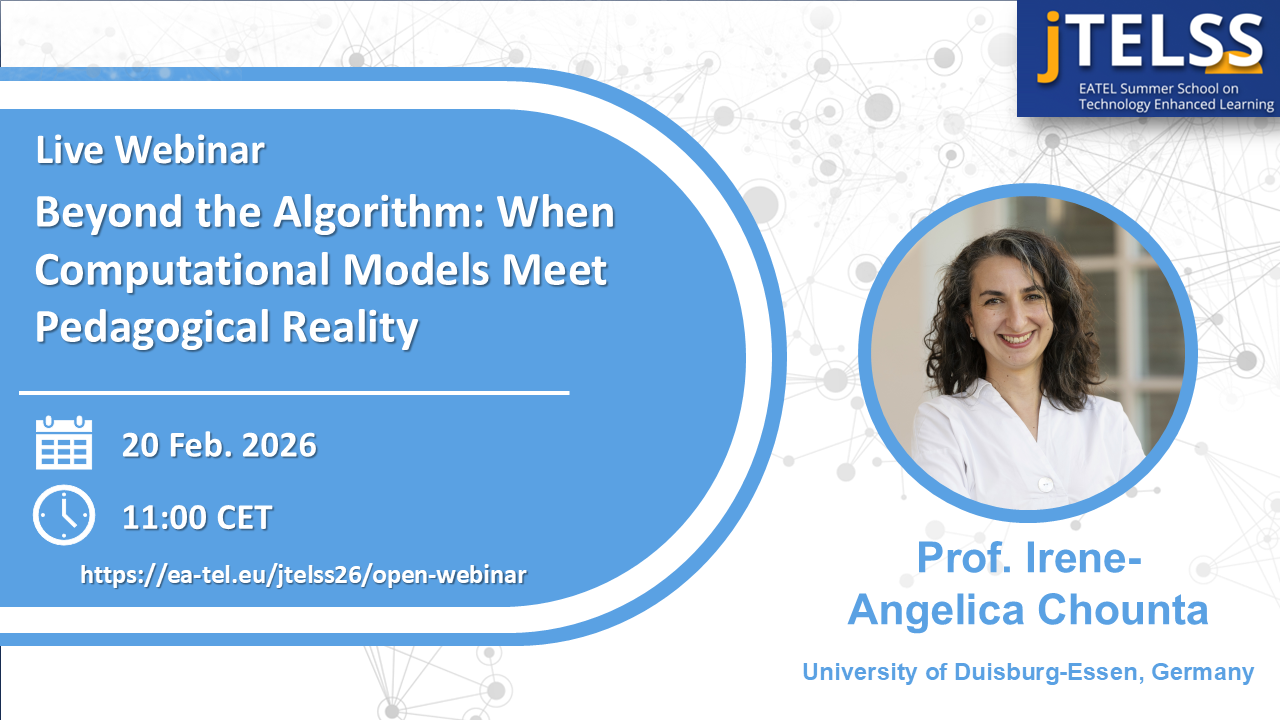

Webinar session 1: Beyond the Algorithm: When Computational Models Meet Pedagogical Reality

Description

We are standing at a critical point: we have unprecedented computational power to model learning, yet many systems optimize for prediction accuracy and performance rather than pedagogical impact. As generative AI and large language models (LLMs) enter educational spaces, we risk amplifying this disconnect: as our computational power grows exponentially, our attention to how learning actually happens (the messy, temporal, social, and developmental processes that make learning meaningful) shrinks in inverse proportion. In other words, we risk creating a generation of educational technologies that are:

- Computationally sophisticated but pedagogically shallow: Systems that can process vast amounts of data but lack grounding in how humans learn, develop, and make sense of the world;

- Predictively accurate but developmentally blind: Models that forecast performance on predetermined tasks but cannot support the uncertain, exploratory, and potentially inefficient processes through which learning happens;

- Efficient but inequitable: Systems that optimize learning pathways for those who already match the patterns in training data, while providing "personalization" to others that actually means "remediation to fit the norm".

How do we ensure that our computational sophistication serves learning science? Are we building systems that foster better learners, or just better predictors? This talk argues for a "pedagogically-aware computing" paradigm in which:

- Uncertainty becomes opportunity for learning

- Temporal dynamics inform real-time intervention

- Computational models remain grounded in theories of learning that center human development

Facilitator

Irene-Angelica Chounta holds a Professorship in Computational Methods in Modeling and Analysis of Learning Processes in the Department of Human-Centered Computing and Cognitive Science at the University of Duisburg-Essen, where she heads the research group colaps. Her research focuses on computational learning analytics (LA) for technology-enhanced learning (TEL), artificial intelligence in education (AIED), and educational technologies. Her main research interest is modeling learners’ behavior in order to provide evidence-based, adaptive, and personalized feedback in a variety of contexts: from intelligent tutoring systems and computer-supported collaborative learning environments to hackathons and makerspaces. In 2019, she was awarded a four-year start-up grant from the Estonian Research Council for her research on “Combining machine learning and learning analytics to provide personalized scaffolding for computer-supported learning activities”. Irene was born and raised in Athens, Greece, and studied at the University of Patras. She has lived and worked in the USA (Carnegie Mellon University), Germany (University of Duisburg-Essen), and Estonia (University of Tartu). She currently serves as an expert consultant to the Council of Europe on the use of Artificial Intelligence in Education and as a member of the Executive Board of the IAIED Society. She previously served as Communications Co-Chair of the International Society of the Learning Sciences (ISLS), and she is an active member of the Society for Learning Analytics Research (SoLAR).

About me

I believe that education is the answer to many questions, along with kindness and understanding. I also believe in working together to achieve common goals for the common good. My overarching goal is to empower people to pursue their dreams, even beyond what seems "possible", and to use my privilege and power to create opportunities for and support those around me, especially youth from underrepresented groups and minorities. Everyone has a right to education.

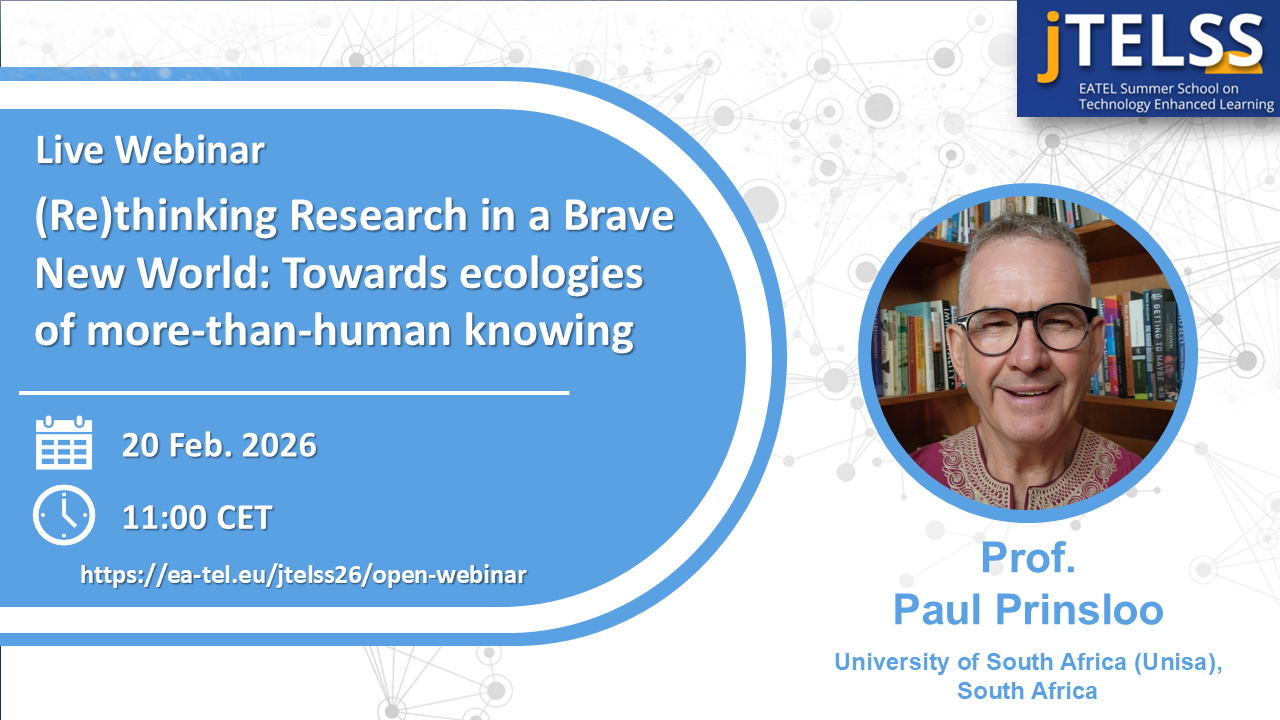

Webinar session 2: (Re)thinking Research in a Brave New World: Towards ecologies of more-than-human knowing

Description

Artificial Intelligence (AI), as a set of technologies and as an imaginary, has become "the new magnetic field of all earthly existence" (Mbembe, 2022), creating a "new common world, a new common sense, and new ordinations of reality and power" (Mbembe, 2024). How do we understand this new common sense, and how do these systems of reality and power function in the context of scientific research? We are swamped with tools that offer to summarise long documents and to provide personalised recommendations and answers even before we ask. We are invited to abdicate choices and tasks to agents that work faster than us and get more done in a day, allowing us to do more of the things we want to do but never have time for. We don't have to read articles because we are offered summaries. Problem statements, research questions, research designs and analyses are available as soon as we formulate a prompt. The immediacy of algorithmic tools such as Large Language Models (e.g., ChatGPT, Gemini) offers to spare us the burden and responsibility of interpreting, evaluating and narrating our own unique understanding of events as we, often mindlessly, copy and paste the answers we receive from a machine-brain.

How do we engage with AI in our research without giving away our voice, our ability to affectively and materially engage with the world around us in all its complexity?

There are many possible ways to make sense of research in the context of this new common world and its new arrangements of power, reality and meaning. For example, we can see AI as ontological and epistemic infrastructure. Like all infrastructure, it makes some things possible, selectively foregrounds some things while hiding others, and (increasingly automatically) performs actions without transparency and/or accountability. Infrastructure is political; it has always been political. Infrastructure actively performs the wishes and dreams of its designers. We can also see AI as reconfiguring and participating in ecologies of being, knowledge, coming-to-know and knowing. This lens decentres the human as the centre and apex of this planet and acknowledges that we share cognition and sense-making with non-human actors, realms and relationships. Although distinct, these two lenses (AI as epistemic infrastructure and AI as part of ecologies of being, knowledge, coming-to-know and knowing) may overlap, and their nexus offers emergent and interesting potential for research agency and responsibility, as well as a renewed commitment to accountability.

Key takeaways of the presentation include, inter alia:

- The importance of understanding how knowledge and being human are reconfigured, automated and packaged in service of extractive commercial interests.

- The value of a transparent, ethical, and critical use of AI for your reputation, voice and career as a researcher.

- The reality of cognitive offloading and skills atrophy.

- The burden and responsibility inherent in making knowledge claims.

- What to consider when you use AI in your research.

Facilitator

Paul Prinsloo is a Professor Extraordinaire in the Department of Business Management, College of Economic and Management Sciences, University of South Africa (Unisa). He was a visiting professor at the National Open University of Nigeria (NOUN) from 2023 to 2025. He is a member of the Center for Open Education Research at the Carl von Ossietzky University of Oldenburg (Germany), a Senior Fellow of the European Distance and E-Learning Network (EDEN), an International Advisory Board Member of the Open University of Malaysia (OUM), and a Global Fellow of OUM's Centre for Digital Education Futures (CENDEF). Paul has published numerous articles on Artificial Intelligence (AI) in teaching and learning, student success in distributed learning contexts, the ethical collection, analysis, and use of student data in learning analytics, and digital identities.

About me

I did not choose my gender, my race, my parents, my culture, the country I was born in, or any of the privileges, responsibilities and/or penalties that accompanied these. Looking at the choices I had and made (some good, some bad), as well as those serendipitous moments that I did not ask for but that somehow provided me with unheard-of and unimagined opportunities, I can only fall silent in awe. Three things characterise my life: a sense of awe, curiosity and trouble. My research serves all three.